This Darchive describes our year-3 analysis comparing various ‘extension’ models of cosmology to our galaxy clustering and weak lensing data. It is based on this paper: https://arxiv.org/abs/2207.05766

Read more about this work in this press release from NASA’s Jet Propulsion Laboratory.

In a previous Darchive, we presented the DES Y3 cosmological constraints, how they were obtained, and the systematic uncertainties that can contaminate them. That analysis, however, considered only the standard model of cosmology, ΛCDM, and its minimal extension, wCDM.

The standard model of cosmology (ΛCDM) assumes that today we have ‘Cold Dark Matter’ (the ‘CDM’ in ‘ΛCDM’), meaning the Dark Matter moves slowly and clusters together easily. It also assumes that Dark Energy is a cosmological constant (the ‘Λ’ in ‘ΛCDM’); the minimal extension, wCDM, instead allows some other constant relationship between the Dark Energy’s pressure and its density (the ‘w’ in ‘wCDM’). ΛCDM works very well in predicting the amount of structure in our Universe, like galaxies and galaxy clusters, and how the Universe expands over time, and it provides great fits to a variety of cosmological observations (with a few possible exceptions, such as the ‘Hubble tension’ over what the exact rate of expansion should be). The ΛCDM model assumes the Universe is ‘flat’ in terms of General Relativity, but that requires the existence of Dark Matter and Dark Energy, which thus far seem unrelated to other known particles in physics.

We analyzed our data within this model to figure out things like how much matter there is and how matter needs to clump together to match the observed properties of the Universe. But what if the model of the Universe is quite different? What if the Universe is not as flat as we think it is? Are there more, yet undiscovered particles like ‘sterile neutrinos’ streaming through the cosmos? Has the nature of Dark Energy changed over time? How well do our measurements of the growth of structure adhere to the standard model? And what if General Relativity is wrong? Along with these questions, the DES Y3 Extensions analysis asks the question: can extending the standard model with the DES Y3 data show hints of physics beyond what is currently known? To analyze our data under several different models is a lot of extra work compared to one general model, which is why this ‘extensions’ paper was published more than a year after the standard model analysis. In this Darchive, we cover six of the most popular extensions to the standard model, explain the procedures used for model validation, and report the interesting cosmological constraints from the ‘extensions’ analysis.

The “standard model” of cosmology, ΛCDM, faced off against many other cosmological models. Read on to see who prevailed. Credit: Jessie Muir, jessiemuir.com

When Hercules faced the Hydra, chopping off one of the heads of the beast brought forth two more. Similarly, each of the models we consider in this analysis has many theories for how it could come about. We would like to avoid cutting off as many heads of the Hydra as possible so we aren’t overwhelmed by a swarm of models. For this reason, we consider phenomenological extensions to the standard model of cosmology: models which capture the main physical effects without precisely defining the physical mechanism that produces them. Using these models, other scientists can translate their theories into our parameters to see whether introducing new physics better fits the data. Below, we describe our six main extensions to the standard model of cosmology.

The Heads of the Hydra: Our Extensions to the Standard Model

w0-wa: Time-Dependent Dark Energy

In this model, we consider a time-dependent parameterization of Dark Energy, which differs from the standard model’s assumption that Dark Energy is a ‘cosmological constant’ whose energy density does not change with time. We parameterize the model through the Dark Energy equation-of-state parameter today, w0, and a term which captures its evolution over time, wa. The aim is to capture any time dependence in the Dark Energy density through two simple parameters, which can then be mapped onto physically motivated models of Dark Energy. Fixing w0 to -1 and wa to 0 (i.e., no time dependence) recovers ΛCDM. We also report what we call the pivot redshift: the redshift (i.e., the epoch in the Universe’s history) at which the data constrain the Dark Energy equation of state most precisely.
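To make the two parameters a little more concrete, here is a minimal sketch. It assumes the widely used form w(a) = w0 + wa(1 - a), where a is the scale factor of the Universe (a = 1 today); this post does not spell out the functional form, so treat that choice, and the function names, as illustrative assumptions.

```python
import math


def scale_factor(z):
    """Convert redshift z to the scale factor a (a = 1 today)."""
    return 1.0 / (1.0 + z)


def w_of_a(a, w0=-1.0, wa=0.0):
    """Dark Energy equation of state, ASSUMING w(a) = w0 + wa * (1 - a)."""
    return w0 + wa * (1.0 - a)


def dark_energy_density_ratio(a, w0=-1.0, wa=0.0):
    """Dark Energy density at scale factor a, relative to today.

    Follows from integrating the assumed w(a) above:
    rho(a) / rho(1) = a^(-3 (1 + w0 + wa)) * exp(-3 wa (1 - a)).
    """
    return a ** (-3.0 * (1.0 + w0 + wa)) * math.exp(-3.0 * wa * (1.0 - a))


if __name__ == "__main__":
    a_half = scale_factor(1.0)  # redshift 1, when the Universe was half its size
    # Cosmological constant: the density never changes.
    print(dark_energy_density_ratio(a_half))
    # A hypothetical evolving Dark Energy, for comparison only.
    print(dark_energy_density_ratio(a_half, w0=-0.9, wa=0.3))
```

With the default values (w0 = -1, wa = 0) the density ratio is exactly 1 at every epoch, which is the cosmological constant of ΛCDM.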

Ωk: The Curvature of the Universe

Through a variety of probes, the Universe is well-constrained to be ‘flat’ in terms of General Relativity. You can think of the overall curvature as being either flat (e.g., a flat sheet of paper), positively curved (e.g., the surface of a sphere), or negatively curved (e.g., where you sit on a horse’s saddle). DES helps constrain the overall curvature of the Universe by breaking degeneracies between the curvature and matter density parameters. However, when Cosmic Microwave Background (CMB) analyses relax the assumption of a flat Universe, there appears to be a preference for a negative value of Ωk, which corresponds to a closed, positively curved Universe. It is then interesting to ask what DES data can add to this picture.
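The sign convention for Ωk is easy to trip over, so here is a small sketch of it. It uses the standard bookkeeping that Ωk is whatever is left over once the other density parameters are summed; the function names and the example numbers are made up for illustration.

```python
def omega_k(omega_m, omega_de, omega_r=0.0):
    """Curvature parameter as the leftover of the energy budget."""
    return 1.0 - (omega_m + omega_de + omega_r)


def geometry(ok, tol=1e-8):
    """Translate the sign of Omega_k into a geometry.

    Note the sign flip: NEGATIVE Omega_k means POSITIVE (sphere-like)
    curvature, and positive Omega_k means negative (saddle-like) curvature.
    """
    if abs(ok) < tol:
        return "flat"
    if ok < 0:
        return "closed (positively curved)"
    return "open (negatively curved)"


if __name__ == "__main__":
    # Illustrative (not measured) density parameters.
    for omega_m in (0.25, 0.30, 0.35):
        ok = omega_k(omega_m, omega_de=0.70)
        print(omega_m, round(ok, 3), geometry(ok))
```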

Neff: The Number of Neutrino-like Particles

The standard model is so called because it makes fantastic predictions for a wide range of phenomena. The early Universe was hot and teeming with relativistic (very fast with lots of energy) particles: very different from the Universe we see today. The standard model of particle physics lends a hand in describing how the basic elements in the Universe formed. We typically think of Hydrogen and Helium as the major products of that era, but the early Universe was also filled with neutrinos. Neutrinos are predicted by the standard model of particle physics to be massless particles which can collide with matter to transform neutrons into protons (and vice versa), producing electrons or positrons along the way. However, through a variety of experiments, we are able to measure non-zero mass differences between the three types of neutrinos, meaning that they actually have mass! Neutrinos’ properties are particularly pesky to figure out though, as they interact only weakly with matter. Still, neutrinos, and any other fast, relativistic particles in the early Universe, would affect the structure of galaxies and matter. The Neff parameter measures approximately how many types of relativistic particles there must have been in the early Universe. We currently know of three types of neutrinos, but there could be more types of neutrinos or other low-mass, fast particles yet undiscovered. If there were fewer or more relativistic particles than the three types of neutrinos we currently know about, we would see the consequences in the structure of galaxies and matter.
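One way to see what Neff counts is through the total energy density in radiation. The sketch below uses the textbook relation between the photon density and the total relativistic density, valid after electrons and positrons have annihilated and while the neutrino-like particles are still relativistic. The function name is ours, and this is an illustration rather than the calculation done in the paper.

```python
# Energy density of one neutrino-like species relative to photons:
# 7/8 from Fermi-Dirac vs. Bose-Einstein statistics, and (4/11)^(4/3)
# because neutrinos end up colder than photons.
PER_SPECIES_FACTOR = (7.0 / 8.0) * (4.0 / 11.0) ** (4.0 / 3.0)


def radiation_to_photon_ratio(n_eff):
    """Total relativistic energy density divided by the photon energy density."""
    return 1.0 + PER_SPECIES_FACTOR * n_eff


if __name__ == "__main__":
    for n_eff in (3.0, 4.0):
        print(n_eff, radiation_to_photon_ratio(n_eff))
```

Each extra unit of Neff adds roughly 23% of the photon energy density to the early Universe, which changes how fast the Universe expanded at early times.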

Neff-meff: Massive Sterile Neutrinos

Continuing on the topic of possible undiscovered particles in the Universe, the Neff-meff model posits the existence of a new particle that behaves like a neutrino, called a massive sterile neutrino. We call it sterile as it does not interact strongly with matter or other neutrinos. We give this particle an effective mass, meff, and set its temperature through the parameter Neff, keeping each within some reasonable range (we go over creating ‘informative priors’ in a later section). If our sterile neutrino has the same temperature as our standard model neutrinos, it adds 1 to Neff. Adding more or less than 1 corresponds to sterile neutrinos being hotter or colder than standard model neutrinos, respectively, with colder neutrinos able to cluster together more easily. While the previous Neff model asks whether we see evidence for extra relativistic (very fast with lots of energy!) particles at early times, Neff-meff asks how this extra particle’s mass affects late-time structure formation. This model is then a great bridge between early-time and late-time structure probes, and it can tell us a lot about the potential properties of an extra sterile neutrino.
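As a rough sketch of how the extra contribution to Neff relates to temperature, suppose the sterile neutrino is a thermal relic, so that its contribution scales as the fourth power of its temperature relative to standard neutrinos. Under that assumption, a commonly used convention relates the effective mass to the particle’s physical (‘thermal’) mass by meff = ΔNeff^(3/4) × m_thermal. Both relations are assumptions of this illustration and are not quoted from the paper.

```python
def sterile_temperature_ratio(delta_neff):
    """Sterile-to-standard neutrino temperature ratio for a thermal relic.

    ASSUMES delta_neff = (T_sterile / T_neutrino)^4.
    """
    if delta_neff <= 0.0:
        raise ValueError("delta_neff must be positive")
    return delta_neff ** 0.25


def thermal_mass_ev(m_eff_ev, delta_neff):
    """Physical mass (eV) under the ASSUMED convention
    m_eff = delta_neff^(3/4) * m_thermal.
    """
    if delta_neff <= 0.0:
        raise ValueError("delta_neff must be positive")
    return m_eff_ev / delta_neff ** 0.75


if __name__ == "__main__":
    # Hypothetical values, chosen only to show the trend.
    for delta_neff in (1.0, 0.5, 0.0625):
        print(delta_neff,
              sterile_temperature_ratio(delta_neff),
              thermal_mass_ev(1.0, delta_neff))
```

For a fixed meff, a smaller contribution to Neff means a colder and physically heavier particle, which is why colder sterile neutrinos cluster more easily.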

Σ0-μ0: Testing General Relativity

General Relativity, on large and small cosmological scales, has been a massive success in predicting structure formation, gravitational lensing, and other phenomena. This extension asks how exact General Relativity is on cosmological scales. We parameterize a change in how matter interacts with matter with μ0 and a change in how light interacts with matter with Σ0; both are zero if General Relativity holds. This model actually changes a lot of the assumptions made in the main analysis. Similar to our Ωk model, which changes the background curvature of the Universe, this model changes the very nature of gravity. A lot of work needed to be done in our theoretical analysis to correctly model how these parameters change our cosmological analysis.
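Here is a sketch of one common way such deviations are given a time dependence. It assumes that each deviation grows in proportion to the Dark Energy fraction of the Universe, so it is negligible at early times and reaches its full value (Σ0 or μ0) today. That scaling, the flat ΛCDM background, and the example numbers are all assumptions of this illustration.

```python
def dark_energy_fraction_ratio(z, omega_m=0.3):
    """Omega_DE(z) / Omega_DE(today) in a flat LCDM background.

    With a constant Dark Energy density this ratio is 1 / E(z)^2,
    where E(z)^2 = omega_m * (1 + z)^3 + (1 - omega_m).
    """
    omega_de = 1.0 - omega_m
    e_squared = omega_m * (1.0 + z) ** 3 + omega_de
    return 1.0 / e_squared


def modified_gravity_amplitudes(z, sigma0, mu0, omega_m=0.3):
    """Return (Sigma(z), mu(z)), ASSUMING both scale with the Dark Energy fraction."""
    scaling = dark_energy_fraction_ratio(z, omega_m)
    return sigma0 * scaling, mu0 * scaling


if __name__ == "__main__":
    # Hypothetical deviations from General Relativity.
    for z in (0.0, 0.5, 1.0, 3.0):
        print(z, modified_gravity_amplitudes(z, sigma0=0.1, mu0=-0.1))
```

Setting both amplitudes to zero returns zero at every redshift, which is the General Relativity case.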

Binned σ8(z): Non-Parametric Structure Growth

Instead of testing an extension to ΛCDM, here we test the robustness of ΛCDM in predicting the amplitude of matter-density fluctuations across cosmic time. In each redshift bin (and for the CMB, when it is included in the analysis), we vary a new parameter which controls this amplitude. This allows us to better investigate possible deviations from ΛCDM in the growth of structure and to assess when deviations from ΛCDM could contribute to what’s called the σ8 tension: that early- and late-time observations of the Universe somewhat disagree on how clumpy it should be.

Preparing to fight the Hydra: Model Validation

Before we analyze real data, we first create a suite of simulated data to ensure what we observe is robust to other modeling choices and free of contamination from known but unconstrained physics. We use simple but informative models to describe systematic uncertainties in the data, like how galaxy shapes align with their local environment and how galaxies trace the underlying distribution of CDM. DES measures three two-point (2pt) statistics, cosmic shear, galaxy-galaxy lensing, and galaxy clustering, which together make up the ‘3x2pt’ analysis. We also use data from other experiments which look at other cosmological observables to add information to our constraints.

The validation process used in the Extensions analysis first consists of contaminating simulated data with various factors which can produce significant shifts in our cosmological constraints. Most of these factors come into play at relatively small scales compared to the survey area. We then know that certain scales in our final measurements are prone to contamination and must be removed from the final analysis. Of course, this has already been done in the ΛCDM study for the standard cosmological model. However, we now look beyond the standard model and must re-do the analysis to see what additional physics could be contaminating our results, possibly leading us to use even less data.
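The idea of removing contaminated scales can be sketched as a simple mask over a measurement. The angular scales, values, and threshold below are entirely made up; the actual scale cuts are chosen per measurement and per model, as described in the paper.

```python
def apply_scale_cut(theta_arcmin, data_vector, theta_min_arcmin):
    """Keep only the measurements at angular scales >= theta_min_arcmin.

    Returns the surviving scales and the matching data points.
    """
    if len(theta_arcmin) != len(data_vector):
        raise ValueError("scales and data must have the same length")
    kept = [(t, d) for t, d in zip(theta_arcmin, data_vector)
            if t >= theta_min_arcmin]
    return [t for t, _ in kept], [d for _, d in kept]


if __name__ == "__main__":
    # Hypothetical correlation function measured in five angular bins.
    theta = [2.5, 5.0, 10.0, 40.0, 100.0]
    signal = [3.1e-5, 2.0e-5, 1.2e-5, 4.0e-6, 1.5e-6]
    kept_theta, kept_signal = apply_scale_cut(theta, signal, theta_min_arcmin=10.0)
    print(kept_theta, kept_signal)
```

A more aggressive cut (a larger minimum scale) protects against more small-scale contamination, at the price of throwing away data and weakening the constraints.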

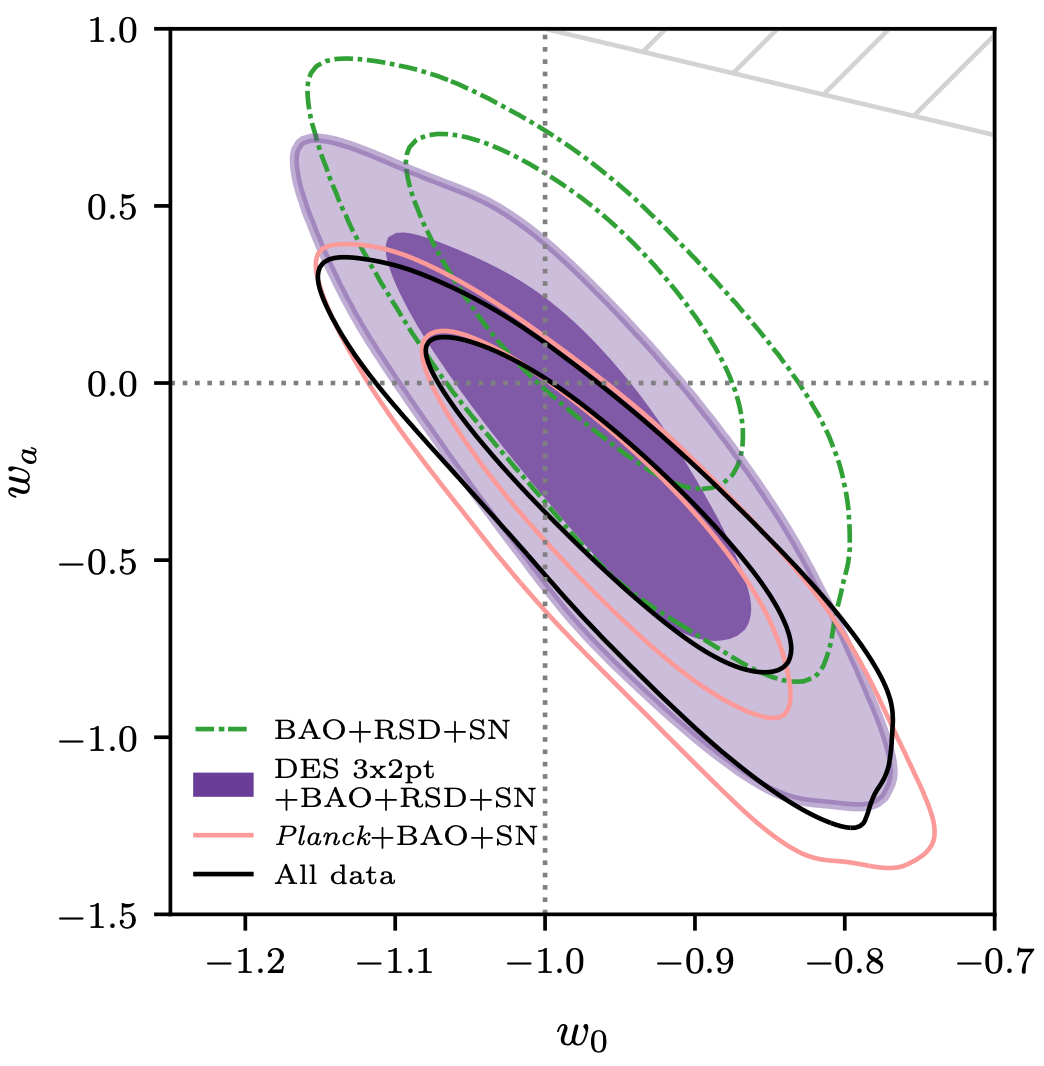

Figure 1: Constraints of DES Y3 data with and without external data on the w0-wa model. Contours of the same color represent the same data, and each contour encloses the region within which the true value lies with a given probability. The smaller contours tell us that the true value is within this region with a 68% certainty and the larger contours with a 95% certainty. The dotted vertical and horizontal lines correspond to the values of the standard model, ΛCDM. We see that the data constrain these values to be consistent with ΛCDM.
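For readers curious how 68% and 95% regions translate into ‘numbers of sigma’ in a two-parameter plot like this one, here is a small sketch. It assumes an idealized two-dimensional Gaussian constraint, which the real contours only approximate.

```python
import math


def delta_chi2_for_2d_region(probability):
    """Delta chi^2 enclosing the given probability for a 2D Gaussian.

    For two parameters, the enclosed probability is 1 - exp(-delta_chi2 / 2),
    so delta_chi2 = -2 * ln(1 - probability).
    """
    if not 0.0 <= probability < 1.0:
        raise ValueError("probability must be in [0, 1)")
    return -2.0 * math.log(1.0 - probability)


def radius_in_sigma(probability):
    """Contour radius, in units of sigma, for an uncorrelated 2D Gaussian."""
    return math.sqrt(delta_chi2_for_2d_region(probability))


if __name__ == "__main__":
    for p in (0.68, 0.95):
        print(p, delta_chi2_for_2d_region(p), radius_in_sigma(p))
```

In this idealized case the 68% and 95% contours sit at roughly 1.5 and 2.4 sigma from the center, farther out than the familiar one-dimensional 1 and 2 sigma, because the region has to capture both parameters at once.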

Another part of model validation involves using more complex models for some of the underlying systematics in our analysis to ensure that the model we use fully captures the information in the data. Using this information, we can better understand how our model interprets the data and how we can modify some model parameters to account for discrepancies (e.g., introducing upper/lower bounds on cosmological parameters). In our synthetic data tests, we saw mild but acceptable shifts in the σ8(z) model and strong, unacceptable shifts in Neff-meff. We investigated more closely and found that much of the constraining power in the Neff-meff model came from a region of parameter space that is physically impossible. We then implemented informative priors on the Neff-meff model, which restrict it to the ways it could be physically realized. You can see more about this discussion in the paper.

The Verdict: Is the Hydra Slain?

Generally, DES Y3 data add greater constraining power to these models compared to the previous data releases when combined with external data. While DES data alone weakly constrain most of our models, it is the combination of observations from different cosmic phenomena that grants us the most stringent constraints on these extended models to date. In some models, like w0-wa, we don’t add a lot of information. In Figure 1, we see our constraints along with constraints obtained with external data. The gray dashed lines represent the values which coincide with ΛCDM. We find that, despite allowing the Dark Energy density to vary over time, our constraints are still consistent with a cosmological constant.

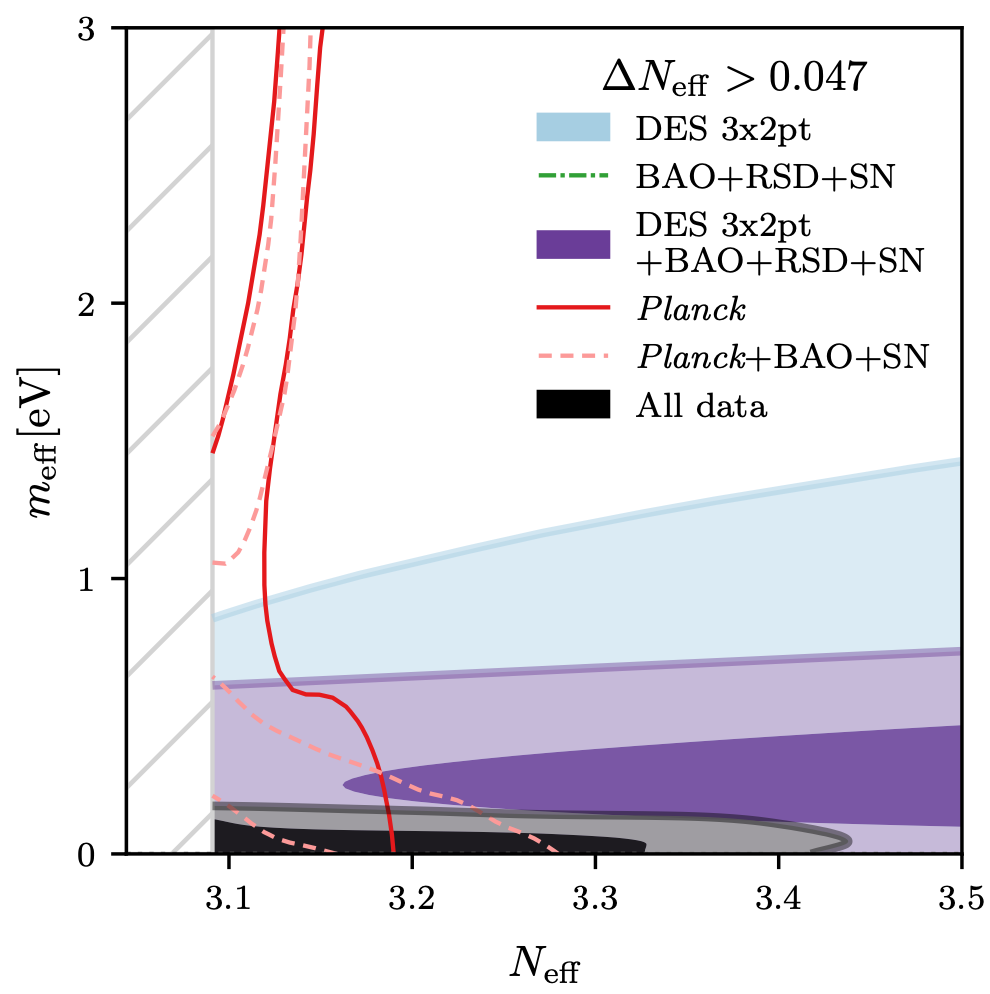

However, in other extended models, DES adds a lot of information. In fact, we are able to place constraints on the mass of a sterile neutrino which are three times tighter than previous analyses, as shown in Figure 2.

Figure 2: Constraints of DES Y3 data with and without external data on the Neff-meff model. Contours of the same color represent the same data, and each contour encloses the region within which the true value lies with a given probability. The smaller contours tell us that the true value is within this region with a 68% certainty and the larger contours with a 95% certainty. The blank region that is slashed-out on the left represents the unphysical region of this model, described in the previous section. We find DES Y3 data alone do not constrain this model much, but with the inclusion of data from other probes we obtain the tightest constraints on the mass of a sterile neutrino to date!

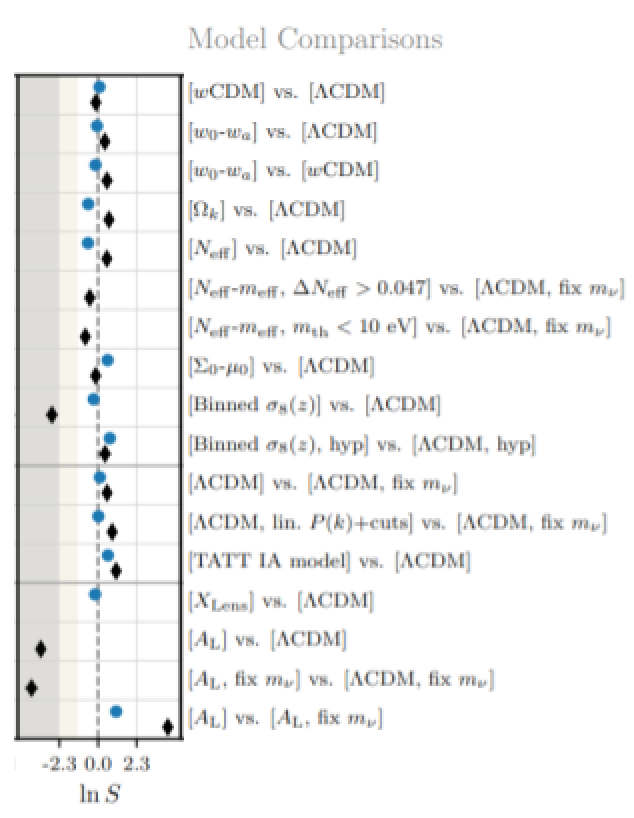

Figure 3: One of the many metrics used to quantify cosmological model preference. The models we compare are listed on the right-hand side and the metric’s values are given in the left-hand column. Blue points correspond to values found using DES Y3 data and black points to DES Y3 with all external data used in the analysis. Negative values correspond to a preference for the extended model, positive values to a preference for the standard model, and zero to no preference. Although some points seem very negative, there is, statistically speaking, no preference for one model over another.

In addition to cosmological parameter constraints, we use a variety of metrics to quantify whether the data show any preference between the extended model and ΛCDM. The metrics are organized such that negative values correspond to a preference for the extended model over the standard model; their exact definitions can be found in Appendix E of the Extensions paper. We show one such metric, with its error bars, in Figure 3. Overall, using both the cosmological constraints and the metrics, we see no significant deviations from the standard model of cosmology.

The search then continues to find a model which agrees well with all cosmological observations. Upcoming data releases with increased precision will shed some light on these extended models, and a better understanding of what happens at small scales will help to make future results even more exciting. While ΛCDM successfully outlasted the Hydra this time, there is still more to the Universe to uncover and understand. The Hydra of cosmological models will be back to fight again.

DArchive Author: Paul Rogozenski

Paul is a doctoral candidate at the University of Arizona in Tucson, AZ. He is interested in improving the theoretical models used to constrain cosmological parameters in photometric redshift surveys and investigating their statistical significance, particularly when involving massive neutrinos. Within DES, he leads the sterile neutrino analyses of this Darchive and contributes to the DES standard model analysis. Outside of DES, Paul works on educational materials for future cosmologists as a NASA Space Grant Fellow and can typically be found in his natural habitat playing with his puppy, cooking, or trying to stop his puppy from eating everything he just cooked.

Paper Author: Jessie Muir

Jessie is a postdoctoral fellow at the Perimeter Institute for Theoretical Physics in Waterloo, Ontario. Within DES she works on theory and the combined analysis of multiple cosmological observables. She co-led the analysis team behind the paper featured in this Darchive, looking for physics beyond the standard cosmological model, and also contributed to the development of the methods DES uses to protect its analysis from unconscious experimenter bias. In her spare time, she enjoys drawing (including several of the #darkbites cartoons illustrating DES Y3 papers!), cooking, reading, yoga, and texting friends an excessive number of cat photos.

Paper Author: Agnès Ferté

Agnès Ferté is now a project scientist at SLAC/Stanford University after being a postdoc at the Jet Propulsion Laboratory (JPL) in Pasadena. At JPL she co-led, with Jessie Muir, the international team of students and postdocs who did the analysis described here. She is interested in understanding our Universe thanks to the latest cosmological data, and now works on the Rubin Observatory, which is being built in Chile and will produce data of even greater precision. What a time to be a cosmologist!

DArchive Editor: Ross Cawthon

Ross is a professor at William Jewell College in Liberty, Missouri. He works on various projects studying the large-scale structure of the Universe using the millions of galaxies DES observes. These projects include galaxy clustering, correlations of structure with the cosmic microwave background and using the structure of the Universe to infer the redshifts of galaxies. Ross also coordinates Education and Public Outreach efforts in DES, including managing the Darchives and social media.